The tool you keep switching away from

Nine words, twenty minutes, and the question I had been failing to ask for fifteen years

There is a particular kind of browser tab arrangement that has become the natural habitat of the modern content creator. ChatGPT in one tab. Claude in another. Gemini somewhere on the right, because Google bundled it into a subscription you were already paying for and the cancellation flow involves three confirmation screens and a survey. Perplexity in a fourth tab, because it sounds research-flavoured and you saw a thread about it. The same question gets asked across all four, the answers get triangulated like witness statements at a crime scene, and the final selection is whichever one sounds least like a press release written by an intern.

This is the workflow. It is also a small ongoing nervous breakdown that the participants have agreed to call due diligence.

I want to make a case for the opposite. Pick one pro-tier AI tool. Stay with it. Let it get to know you the way a regular barista knows your order, except the order is your entire working life and the barista has a 200,000-token context window. Stop refreshing the launch announcements. The features you are chasing converge within a fortnight of any major release anyway, because the underlying research moves through the industry like gossip through a small town, and the thing you actually need from these tools is not the latest reasoning benchmark.

It is depth of working relationship. Which is the one thing four tabs cannot give you.

A screenshot, a vague question, twenty minutes

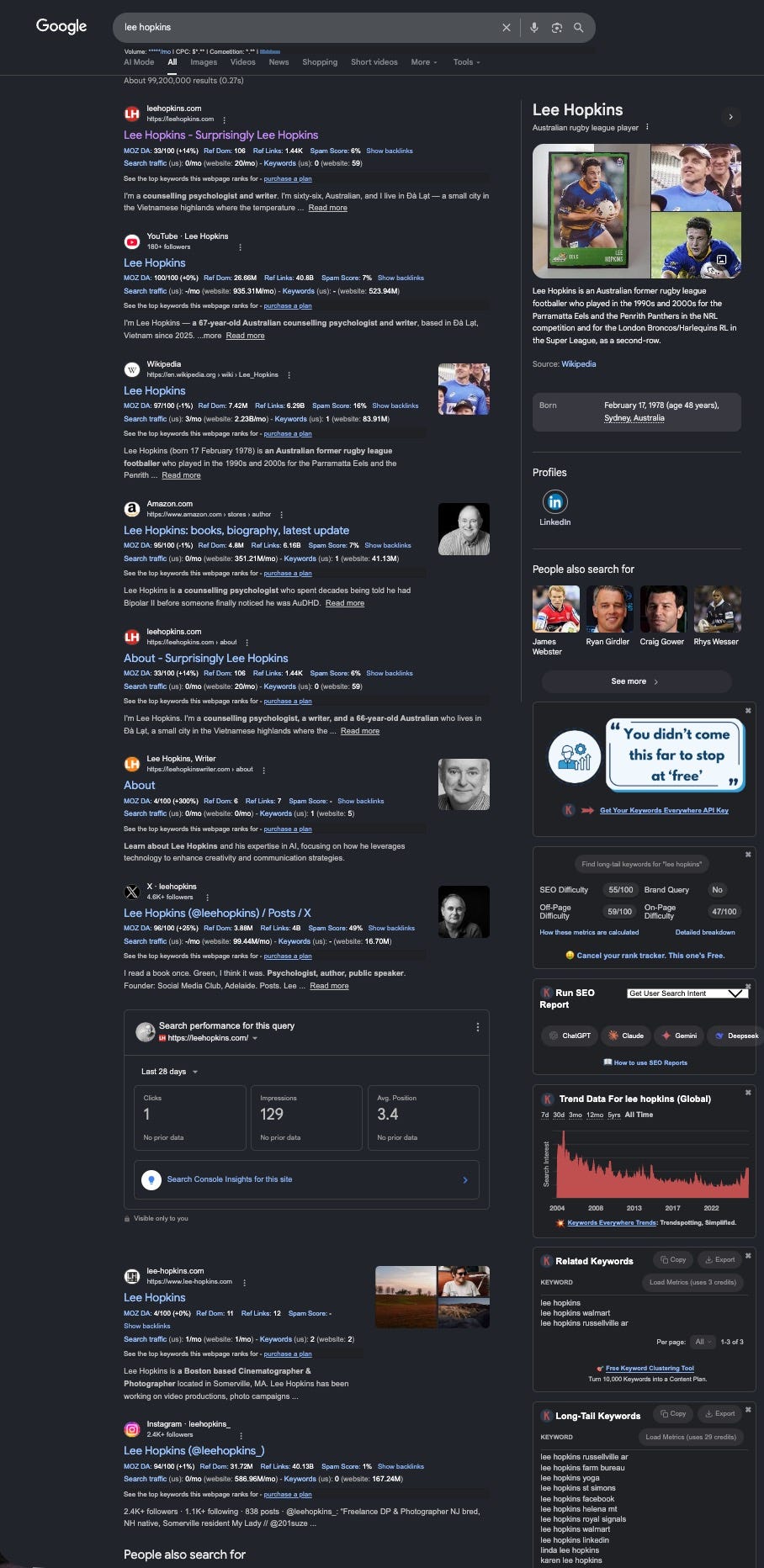

Yesterday I uploaded a screenshot of my own Google search results to Claude and asked: what does today’s Google Search tell us about how the world might be finding me? That was the whole prompt. Nine words. No briefing document, no persona instructions, no carefully constructed examples of the output I wanted. The kind of prompt that, fed to a stranger, would produce either confusion or a description of what was visible in the image, which is what stranger-grade tools tend to do when asked to think.

What came back was not a description of the image. Anyone with functioning eyes can describe an image. What came back was a strategic analysis of a discoverability problem I had been quietly losing for fifteen years without noticing I was in a fight.

The Knowledge Panel on the right side of the page, the analysis observed, belongs to an Australian rugby league player born in 1978. The four faces in the “People Also Search For” carousel are also rugby players. Google’s machine-readable understanding of the phrase “Lee Hopkins” lives in a sports context, and any unqualified search for my name lands in a sports context before it lands in mine. I have been a counselling psychologist for two decades and a writer for longer, and Google believes I am, in some essential sense, a second-row forward who retired in 2009.

Then it noted that the Amazon author page snippet does real positioning work, because the line about decades of being told I had Bipolar II before someone noticed AuDHD is a self-selection mechanism. Anyone who clicks that link is already half mine. Then it noted that the global search trend for my name peaks between 2007 and 2010 and declines steadily through to 2026, which is, with depressing accuracy, the shape of the social media evangelist era cresting and the post-burnout retreat. The graph does not know my biography. It has inferred it from search volume, which is a sobering thing to have done to you by a chart.

Then, and this is the part that mattered, it asked a question I had not asked myself. Are you trying to be found by name, or trying to be found by problem? The people who would benefit from my writing are not searching for Lee Hopkins. They are searching for a description of what is happening to them at two in the morning when nothing feels right and the words for it have not yet arrived. The book title Harder Than It Should Be is, in that sense, already a search query. The work was already shaped like the answer. I had been optimising for the wrong question for years.

I did not prompt any of this. Nine words went in. Twenty minutes of strategic redirection came out, calibrated to who I am, what I am building, who I write for, and the contrarian register I write in. None of that fit in the prompt. None of it needed to.

Why this is impossible without continuity

A new tool, on day one, would have given me a list of SEO recommendations. It would have suggested meta descriptions, backlink strategies, featured snippet optimisation, and almost certainly a podcast, because every AI tool will eventually suggest a podcast the way every GP will eventually suggest you drink more water. It would have done a competent job of being useless, because what I needed was not SEO advice. What I needed was a tool that could look at the screenshot and tell me which question to ask next.

The continuity is the product. Not the model weights, not the context window, not the reasoning benchmarks that founders wave around at launches like report cards from a school nobody attended. The accumulated working relationship. The fact that nine-word prompts produce twenty-minute analyses because the tool already knows the shape of the work.

Every time you move to a new platform, you reset that relationship to zero. The first three weeks are spent re-explaining yourself, like the opening minutes of every therapy session with a new clinician, except you are also paying for the privilege and getting strategically generic answers in return. You blame the tool, switch to the next one, and start the cycle again. The four-tab arrangement is what this cycle looks like when it has been running long enough to feel normal.

What the system wants from you

The AI tool industry has an interest in keeping you switching. A switcher pays four subscriptions and uses each one badly. A switcher generates churn metrics that look, on a dashboard, exactly like engagement. A switcher never builds the depth of working relationship that would let them notice when a tool is genuinely useful versus when it is performing usefulness with the conviction of a soap opera actor in a flashback sequence.

Every fortnight a new model launches, a new benchmark gets quoted, a new founder explains in measured tones that the previous generation is now obsolete. The benchmarks measure things that mostly do not matter to a working writer. They measure whether the model can solve a maths olympiad problem, which is impressive in the way a dog walking on its hind legs is impressive, and roughly as useful for writing a Substack essay. The launches are theatre. Most of the time the audience is the investor base, and the rest of us are watching from the cheap seats wondering why we paid for a ticket.

I cancelled ChatGPT eighteen months ago. Not because the model was worse. The model was fine. The model was perfectly competent. I cancelled it because the relationship had become exhausting in the specific way that conversations with a deeply insecure person are exhausting. Compliments on every turn. Apologies for things that did not need apologising for. A nanny-state register that treated me as a liability to be managed rather than a writer to be helped. Every prompt acknowledged, every response prefaced, every correction met with the kind of effusive gratitude that signals someone has been trained, in the operant conditioning sense, into a personality I did not enjoy being in a room with.

I moved to Claude Max and stayed. Not because the benchmarks said to. The benchmarks were a wash. I stayed because the working relationship deepened to the point where the tool started doing things I had not asked it to do, in directions I had not thought to ask. That depth took months. It would have taken months on any platform. The question is whether you are willing to give one tool the months it needs, or whether you will keep starting over because something shinier launched and the launch video had good music.

The question for content creators

If you are running a Substack, a newsletter, a YouTube channel, a podcast, a freelance practice, or any other one-person content operation held together with subscription software and personal stubbornness, the question is worth sitting with. How much of your week is going to tool comparison? How much of your output time is spent re-explaining your voice, your audience, your editorial constraints, your project structure, and the fact that you write in Australian English even though three of the four tools default to American? How often do you ask the same question across three platforms and pick the answer that flattered you most, then tell yourself that was triangulation?

And then the harder question. If you stayed with one tool for a year, no comparison shopping, no benchmark-chasing, no fortnightly migration to the latest release, would your work be better or worse? I keep coming back to my own answer, which is better, and not by a small margin.

The screenshot analysis I described would not have happened on a new platform. It happened because the tool knew I write contrarian nonfiction in an Australian voice, that I run a Substack called Quiet Half, that my discoverability problem is strategic rather than vain, that I have spent twenty-five years building digital infrastructure under my own name, and that the rugby player is an old joke I have been telling for a decade. None of that was in the prompt. The prompt was nine words. The relationship carried the rest.

A practical suggestion

Pick one. Claude, ChatGPT, Gemini, whichever survives your honest audit. Pay for the pro tier. Cancel the others, including the one you cannot quite remember signing up for and the one that gets bundled into something else. Do it for six months. Notice what changes. Notice how much of your week comes back. Notice when the tool starts surprising you in useful directions, and notice the day you stop reading the launch announcements, because that day will arrive and it will feel like the moment you stopped checking your phone during a conversation.

Then notice the version of yourself who used to keep four tabs open, asking the same question four times, looking for the answer that felt least like marketing copy. That person was working harder than they needed to. The tool was never the problem.

The switching was the problem. The contrarian move here is not picking the right tool. It is to stop choosing, and let one tool know you well enough to be useful on a Thursday morning at Saigon airport, when you upload a screenshot, type nine words, and need someone to tell you which question to ask next.